GSOC - Handle Nested Languages Overview


Hola Amigos!

The Coding Phase - 1 of GSoC has started and it has been pretty fun. I also have my University Finals going on now. They'll end on July 3rd. Bangalore does live in a different kind of world :P - Phew! This is a really busy month. I have to manage Finals, GSoC and the Open Mainframe Internship concurrently. I like this kind of atmosphere though. It keeps me on my toes and pushes me to break the boundaries and limits that I so absent-mindedly set upon myself. And it's pretty damn fun.

There's a different craze - the adrenaline that rushes through your body when you put on your headphones, sit in front of your laptop to code, and learn lots of new stuff every day. It's finger licking good (EWWW! You can come up with a better exclamation, Naveen :facepalm:). There have been a lot of Aha! moments until now as well. And some moments where I felt like hitting Anne so hard that all her teeth would come out. Oh! For the uninitiated - Anne is the name of my laptop. She's so fucking good. Especially when the red light lights her body up. Alright! I won't go on about Anne anymore - else it would get more intimate :speak_no_evil: But all in all - Anne is a good girl and she's mine. So people! Keep your hands off her - she's pretty well encrypted anyway - you won't get any fun just by laying your hands on her. Hehehe!

Ah! Here it is again. I drifted off topic - and I'm too lazy to go back and erase it. So I'll let it stay there anyway.

Coming back to the point! This post is to introduce you (you - as in the bots of the internet) to what my project is, along with a brief overview of it.

The title of my project is Handle Nested languages.

The proposal for this project is present here

Introduction

coala, in its present state, is capable of providing efficient static analysis only for files that contain a single programming language. But multiple programming languages can coexist in a single source file, e.g. PHP and HTML, HTML and Jinja, Python and Jinja, code blocks and RST, etc.

coala does not yet support these kinds of files. This project would enable coala to deal with such situations and allow people to write code analysis the same way they already do, while having it applied to the right locations in the right files. The users of coala would not have to concentrate on writing new bears / analysis routines: this implementation would work with the existing bears.

There can be several ways to approach this. In this cEP, we will be implementing an abstract approach which supports arbitrary combinations of languages.

A higher level view of the implementation

A source file containing multiple programming languages is loaded by coala. The original nested file is split into n temporary files, where n is the number of languages present in the original file. Each temporary file only contains the snippets belonging to one language.

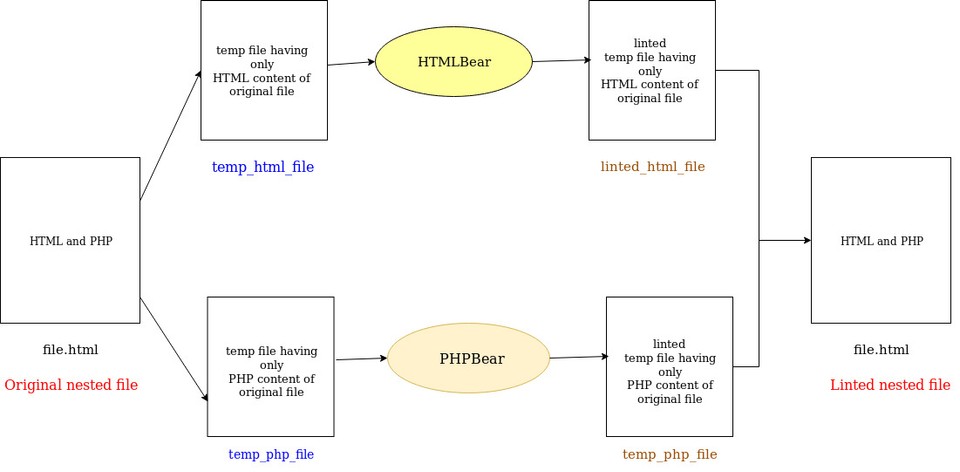

These temporary files would then be passed to their respective language bears ( chosen by the user) where the static analysis routines would run. The temporary files after being linted would then be assembled.

The above process creates the illusion that the original file is being linted, but in reality we divide the files into different parts and lint them one by one.

The figure below, would help give a clear picture
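To make the "illusion" concrete, here is a toy sketch in plain Python, with no coala involved. It assumes every line is already tagged with its language (in the project that is the Parser's job), blanks out other-language lines so each temp file keeps the original line numbering, "lints" each temp file with a stand-in that strips trailing whitespace, and then picks every line back out of its own language's file. The names `split_by_language`, `strip_trailing_ws` and `assemble_lines` are illustrative only. It also assumes linting never adds or removes lines; handling that case is what `linted_start` and `linted_end` are for later on.

```python
def split_by_language(tagged_lines):
    """Return {language: [lines]}, blanking lines of other languages
    so that every temp file keeps the original line numbering."""
    languages = {lang for lang, _ in tagged_lines}
    return {
        lang: [line if line_lang == lang else ''
               for line_lang, line in tagged_lines]
        for lang in languages
    }


def strip_trailing_ws(lines):
    # Stand-in for a bear: a "linter" that removes trailing whitespace.
    return [line.rstrip() for line in lines]


def assemble_lines(tagged_lines, linted_files):
    """Pick each line back out of the linted temp file of its own language."""
    return [linted_files[lang][i] for i, (lang, _) in enumerate(tagged_lines)]


tagged = [
    ('html', '<html>  '),
    ('php', '<?php echo "hi";   '),
    ('php', '?>'),
    ('html', '</html>'),
]
temp_files = split_by_language(tagged)
linted = {lang: strip_trailing_ws(lines) for lang, lines in temp_files.items()}
print(assemble_lines(tagged, linted))
# ['<html>', '<?php echo "hi";', '?>', '</html>']
```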

Nested Language Section (nl_section)

The original file is segregated into small pieces/units, where each piece/unit contains only one language. These quantum pieces of the nested original file are termed nl_sections.

An nl_section can further be defined as a group of lines in the source code that belongs purely to one particular language.

All the nl_sections that belong to one particular language are grouped to form a valid file of that programming language.
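A minimal sketch of that grouping, assuming a simplified `Section` tuple (index, language, and 1-based inclusive start/end lines) in place of the real NlSection class. `group_by_language` is a hypothetical helper name, and the sample sections are the HTML/PHP example used in the Parser prototype further down.

```python
from collections import defaultdict
from typing import NamedTuple


class Section(NamedTuple):
    # Simplified stand-in for NlSection: 1-based, inclusive line range.
    index: int
    language: str
    start: int
    end: int


def group_by_language(sections):
    """Map each language to its sections, kept in original (index) order."""
    grouped = defaultdict(list)
    for section in sorted(sections, key=lambda s: s.index):
        grouped[section.language].append(section)
    return dict(grouped)


sections = [
    Section(1, 'html', 1, 3),
    Section(2, 'php', 4, 6),
    Section(3, 'html', 7, 7),
]
grouped = group_by_language(sections)
# grouped['html'] holds sections 1 and 3, grouped['php'] holds section 2
```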

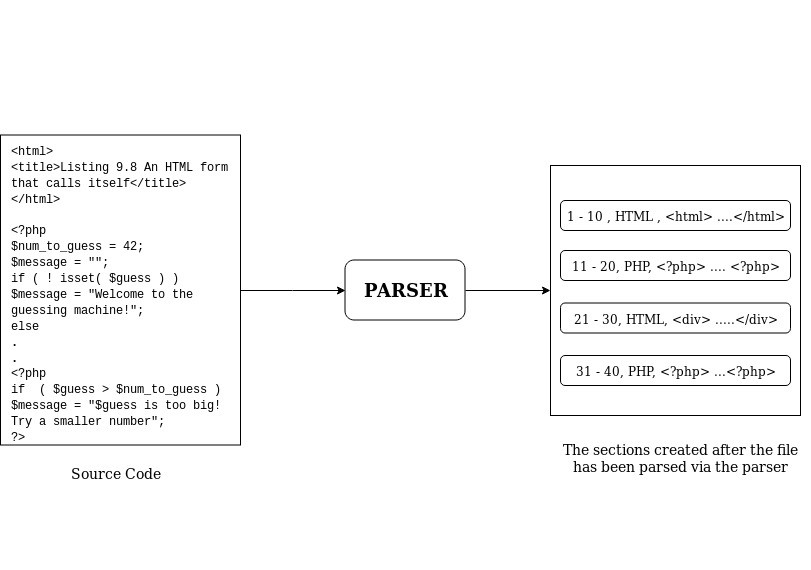

The following figure would make the things clear

In the above figure, we have a file which contains both HTML and PHP code. This original file can be broken up into 4 different sections.

Each nl_section will contain the following information:

- The Programming language of the lines

- Index of the section

- Starting Line number in the original file

- Ending Line number in the original file

- Starting line number in the linted file

- Ending line number in the linted file

Prototype of nl_section

```python
from typing import Optional

from coalib.results.TextRange import TextRange

# NlSectionPosition is the nestedlib's own position class (assumed to carry
# `file`, `line` and `column`, like coala's SourcePosition).


class NlSection(TextRange):

    def __init__(self,
                 orig_start: 'NlSectionPosition',
                 orig_end: Optional['NlSectionPosition'] = None,
                 index=None,
                 language=None):
        """
        Creates a new NlSection.

        :param orig_start: A NlSectionPosition indicating the start of the
                           section in the original file.
        :param orig_end:   A NlSectionPosition indicating the end of the
                           section in the original file.
                           If ``None`` is given, the start object will be used
                           here. end must be in the same file and be greater
                           than start as negative ranges are not allowed.
        :param index:      The index of the nl_section.
        :param language:   The programming language of the lines.
        :raises TypeError: Raised when
                           - start is not of type NlSectionPosition.
                           - end is neither of type NlSectionPosition, nor
                             is it None.
        :raises ValueError: Raised when file of start and end mismatch.
        """
        TextRange.__init__(self, orig_start, orig_end)
        self.index = index
        self.language = language

        # linted_start / linted_end: the start and end of the section in the
        # linted file. Initially they are the same as in the original file;
        # they change only when patches are applied to the section.
        # (TextRange has already replaced a missing end with the start.)
        self.linted_start = self.start
        self.linted_end = self.end

        if self.start.file != self.end.file:
            raise ValueError('File of start and end position do not match.')

    @classmethod
    def from_values(cls,
                    file,
                    start_line=None,
                    start_column=None,
                    end_line=None,
                    end_column=None,
                    index=None,
                    language=None):
        start = NlSectionPosition(file, start_line, start_column)
        if end_line or (end_column and end_column > start_column):
            end = NlSectionPosition(file, end_line if end_line else start_line,
                                    end_column)
        else:
            end = None

        return cls(start, end, index, language)
```

Note

The implementation of the nested language architecture would be done inside a new folder called nestedlib. This nestedlib folder would be present under the coalib directory.

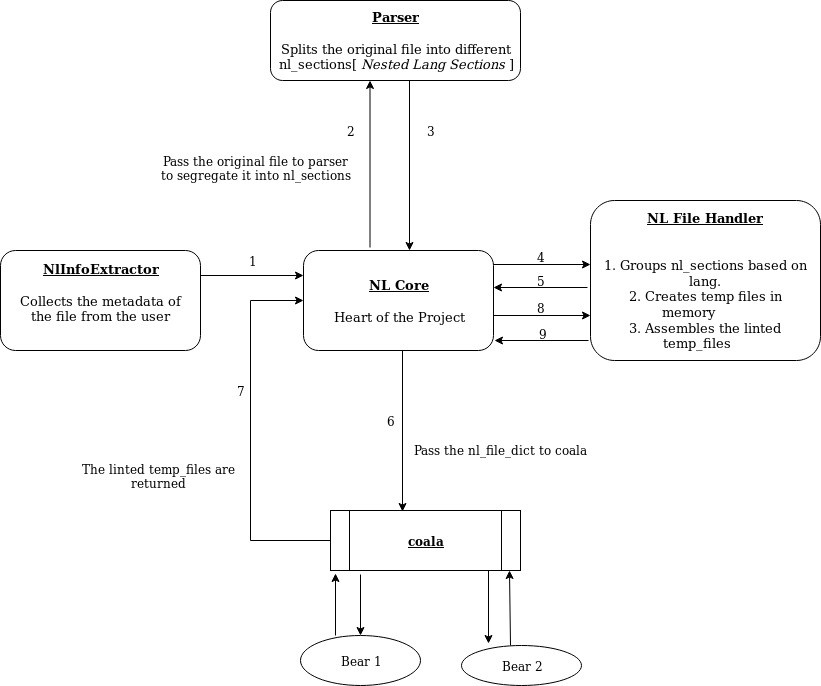

Architecture

The following figure depicts the architecture that is going to be implemented to enable nested languages support.

- NLCore

This is the heart of the project. It is responsible for managing the execution flow of the program.

- NLInfoExtractor

NLInfoExtractor is responsible for extracting information from the user's input. The extracted information includes the languages present in the file, the bears to run for each programming language, and the settings of these bears.

- Parser

Parser splits the original nested file into different nl_sections.

- NLFileHandler

NLFileHandler is responsible for creating the temporary files (each containing all the sections belonging to a single language) that are passed on for the actual analysis.

Implementation

This project will be implemented in four phases:

- Information Gathering

- Segregating the file

- Linting the file

- Assembling

Phase 1: Information Gathering

The users can inform coala about the presence of nested languages in the files using the --handle-nested flag.

For example:

```shell
coala --handle-nested --files=html_php_file.html --language=html,php --bears=HTMLBear,PHPBear
```

- --languages: Informs coala about the languages present in the file.

- --bears: Informs coala about the bears to run on the file.
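For illustration only, here is how flags like these could be declared with Python's standard `argparse`. This is a stand-alone, hypothetical parser that mirrors the example command above; it is not coala's actual `default_arg_parser()`, and the comma-splitting helper `comma_list` is an assumption of this sketch.

```python
import argparse


def comma_list(value):
    # 'html,php' -> ['html', 'php']
    return [item.strip() for item in value.split(',') if item.strip()]


def make_parser():
    # Hypothetical stand-in; not coala's real default_arg_parser().
    parser = argparse.ArgumentParser(prog='coala')
    parser.add_argument('--handle-nested', action='store_true',
                        help='treat the files as containing nested languages')
    parser.add_argument('--files', type=comma_list, default=[])
    parser.add_argument('--language', type=comma_list, default=[])
    parser.add_argument('--bears', type=comma_list, default=[])
    return parser


args = make_parser().parse_args(
    ['--handle-nested', '--files=html_php_file.html',
     '--language=html,php', '--bears=HTMLBear,PHPBear'])
print(args.handle_nested, args.language, args.bears)
# True ['html', 'php'] ['HTMLBear', 'PHPBear']
```

argparse turns `--handle-nested` into the attribute `handle_nested`, which is the name the coala.py changes at the end of this post check.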

The aim of information gathering is to extract the following information from the arguments passed to coala:

- Languages present in the file

- Language and Bear association

- Argument list (would be used to create coala sections)

The languages present in the file can easily be gathered from the --language tag. The language and bear association can be obtained by using the meta information of the bears and matching it with the --languages.
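A sketch of that matching, assuming each bear exposes its languages through a `LANGUAGES` class attribute (coala bears declare one). The two bear classes below are empty stand-ins, not the real HTMLBear and PHPBear, and the case-insensitive comparison is a choice made for this sketch.

```python
class HTMLBear:
    # Stand-in: only mimics the LANGUAGES metadata of a coala bear.
    LANGUAGES = {'HTML'}


class PHPBear:
    LANGUAGES = {'PHP'}


def get_language_bear_dict(languages, bears):
    """Map each requested language to the bears whose metadata lists it."""
    result = {}
    for language in languages:
        result[language] = [
            bear for bear in bears
            # Case-insensitive: the CLI says 'html', the bear says 'HTML'.
            if language.lower() in {lang.lower() for lang in bear.LANGUAGES}
        ]
    return result


language_bear_dict = get_language_bear_dict(['html', 'php'],
                                            [HTMLBear, PHPBear])
# {'html': [HTMLBear], 'php': [PHPBear]}
```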

NlInfoExtractor is responsible for the above tasks.

The original nested language file is divided into various temporary in-memory files, where each file contains the snippets of one particular language. The linting needs to be done by the respective bears on each of these files.

In order to do so, we would have to create virtual coala sections for each file.

Each section would contain the information about the files to be linted, the bears to run on those files and the settings to initialize the bears with. In order to create these sections, we would need the arguments to be passed to the parse_cli() method. The arguments used to initialize coala in nested language mode cannot be used directly to create the coala sections.

The NlInfoExtractor converts the original arguments into different argument lists, where each argument list resembles the arguments a user would have passed if they were linting a single temp_file with the appropriate bears.
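A minimal sketch of this conversion, producing one argument list per language keyed by its `nl.<language>` section name. The function name `make_arg_lists`, the temp file names and the exact flags emitted are assumptions for illustration; bear settings are left out.

```python
def make_arg_lists(temp_file_names, language_bear_dict):
    """Build one coala-style argument list per language / temp file.

    temp_file_names: {language: temp file name}  (hypothetical mapping)
    language_bear_dict: {language: [bear names]}
    """
    arg_lists = {}
    for language, bears in language_bear_dict.items():
        arg_lists['nl.' + language] = [
            '--files=' + temp_file_names[language],
            '--language=' + language,
            '--bears=' + ','.join(bears),
        ]
    return arg_lists


arg_lists = make_arg_lists(
    {'html': 'temp_html_file', 'php': 'temp_php_file'},
    {'html': ['HTMLBear'], 'php': ['PHPBear']})
```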

For example:

```shell
coala --handle-nested --files=html_php_file.html --language=html,php --bears=HTMLBear,PHPBear
```

gets converted to:

```shell
coala --files=temp_html_file --language=html --bears=HTMLBear
```

and

```shell
coala --files=temp_php_file --language=php --bears=PHPBear
```

The above arguments, when passed to the parse_cli() method, create two sections:

```ini
[nl.html]
files = temp_html_file
bears = HTMLBear
setting = value

[nl.php]
files = temp_php_file
bears = PHPBear
setting = value
```

NlInfoExtractor.py Prototype

```python
def extract_info(args):
    """
    Return a dictionary called `nl_info_dict` with all the extracted
    information.

    Let's assume that we have the following arguments:

    >>> args  # coala --handle-nested --files=html_php_file.html --language=html,php --bears=HTMLBear,PHPBear

    >>> nl_info_dict = extract_info(args)

    >>> nl_info_dict
    {
        "file_name": "html_php_file.html",
        "absolute_file_path": "/home/test/html_php_file.html",
        "languages": ["html", "php"],
        "bears": ["HTMLBear", "PHPBear"],
        "language_bear_dict": language_bear_dict,
        "arg_dict": arg_dict
    }

    >>> nl_info_dict['language_bear_dict']
    {
        "html": ["HTMLBear"],
        "php": ["PHPBear"]
    }

    >>> nl_info_dict['arg_dict']
    {
        "nl.html": {"file_name": "temp_html_file",
                    "bears": "HTMLBear",
                    "settings": [(setting, value)]},
        "nl.php": {"file_name": "temp_php_file",
                   "bears": "PHPBear",
                   "settings": [(setting, value)]}
    }
    """


def get_lang_info(args):
    """Return a list of languages present in the nested file."""


def get_bear_info(args):
    """Return the list of bears to be run on the file."""


def get_file_info(args):
    """Return the list of files to be linted."""


def get_absolute_file_path(file_name):
    """Return the absolute file path of each file."""


def get_language_bear_dict(languages, bears):
    """
    Return a language_bear_dict, where each language is mapped to a set of
    bears.
    """


def get_argument_dict(file_list, language_bear_dict, settings):
    """
    Return an argument_dict, where each temp_file is mapped to a set of
    arguments.
    """
```

Phase 2: Segregation of Nested File

This phase deals with segregating the original nested file into different nl_sections and creating temporary files for each nested language, which would contain the snippets of that language from the original nested file. This phase uses the Parser and the NlFileHandler parts of the architecture.

Parser

Parser is responsible for splitting the original file into different nl_sections. The input and output of the parser are standardized: the input is the contents of the original nested file, and the output is the list of nl_sections encompassing the snippets of the different programming languages.

Standardizing the parser removes any restriction on how a parser should parse the contents. A parser can even use third-party APIs, as long as its output is in the format of nl_sections.

Since different combinations of languages would need different parsers, we would create a new folder at nestedlib/parsers, which would host the collection of all the parsers. A new superclass called Parser would be created. It would have all the common methods that the parsers might need, and all the parsers would be derived from this superclass.
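As an illustration of what one concrete parser could produce, here is a deliberately naive, line-based HTML/PHP parser. It assumes `<?php` starts a line, `?>` ends one, and PHP blocks are not nested; a real parser (possibly backed by a third-party library) would need to be far more careful. It returns a simplified `Section` tuple rather than the NlSection class, and `HtmlPhpParser` is a hypothetical name.

```python
from typing import List, NamedTuple


class Section(NamedTuple):
    index: int
    language: str
    start: int  # 1-based, inclusive
    end: int    # 1-based, inclusive


class HtmlPhpParser:
    """Toy parser: labels every line html or php, then merges runs."""

    def line_languages(self, lines):
        in_php = False
        for line in lines:
            stripped = line.strip()
            if stripped.startswith('<?php'):
                in_php = True
            yield 'php' if in_php else 'html'
            # The closing `?>` line still belongs to the PHP block.
            if in_php and stripped.endswith('?>'):
                in_php = False

    def parse(self, file_contents: str) -> List[Section]:
        sections = []
        lines = file_contents.splitlines()
        for line_nr, lang in enumerate(self.line_languages(lines), start=1):
            if sections and sections[-1].language == lang:
                # Same language as the previous line: extend that section.
                sections[-1] = sections[-1]._replace(end=line_nr)
            else:
                sections.append(Section(len(sections) + 1, lang,
                                        line_nr, line_nr))
        return sections


example = ('<!DOCTYPE html>\n<head>\n<title>Hello world as text</title>\n'
           '<?php\necho "<p>Hello world</p>";\n?>\n</html>\n')
sections = HtmlPhpParser().parse(example)
```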

Parser.py Prototype

```python
from coalib.nestedlib.NlSection import NlSection


class Parser:

    def parse(self, file_contents):
        '''
        Returns a list of nl_sections.

        :param file_contents: The contents of the original nested file

        >>> file_contents = """<!DOCTYPE html>
        ... <head>
        ... <title>Hello world as text</title>
        ... <?php
        ... echo "<p>Hello world</p>";
        ... ?>
        ... </html>
        ... """

        >>> p = Parser()
        >>> nl_sections = p.parse(file_contents)
        >>> nl_sections
        [ <NlSection object(index=1, language=html, orig_start=1, orig_end=3)>,
          <NlSection object(index=2, language=php, orig_start=4, orig_end=6)>,
          <NlSection object(index=3, language=html, orig_start=7, orig_end=7)>
        ]
        '''
        # Implemented by the concrete parsers in nestedlib/parsers.
        raise NotImplementedError

    def detect_language(self, text):
        """
        Return the language of the particular string.

        This will be overridden by the child class.
        """
        raise NotImplementedError

    def create_nl_section(self, file, start_line, end_line, language, index):
        """
        Creates an NlSection object from the values and returns it.
        """
        return NlSection.from_values(file,
                                     start_line=start_line,
                                     end_line=end_line,
                                     language=language,
                                     index=index)
```

Making temporary files

This section deals with how the segregated sections (nl_sections) from the parser are combined to form the different temporary files on which linting will be done.

NlFileHandler is responsible for creating the temporary files. These temporary files are stored in memory.

The nl_sections are passed to NlFileHandler. NlFileHandler then creates an nl_file_dict, where the key is the name of the temporary file and its contents are the value. It is similar to the file_dict that is created by coala in instantiate_process() during the execution of a section.

It is important to keep in mind that the temporary segregated files are not actually present in the folder. In the normal flow of coala, during the execution of the coala sections, coala tries to find the files by the filenames mentioned in the coala section and then creates a file_dict. That cannot happen in our case, so we explicitly replace the file_dict with the nl_file_dict.
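Below is a minimal sketch of how an nl_file_dict could be built from the sections. It assumes simplified `Section` tuples and a hypothetical language-to-temp-file-name mapping, and it stores each file as a tuple of lines with their line endings, to mirror what I understand coala's `file_dict` to hold. Lines belonging to other languages become empty lines, so line N of every temp file lines up with line N of the original file.

```python
from typing import NamedTuple


class Section(NamedTuple):
    index: int
    language: str
    start: int  # 1-based, inclusive
    end: int    # 1-based, inclusive


def get_nl_file_dict(file_lines, sections, temp_names):
    """Return {temp_file_name: tuple_of_lines}.

    Lines owned by another language are replaced by an empty line, so that
    every temp file has exactly as many lines as the original file."""
    nl_file_dict = {}
    for language, temp_name in temp_names.items():
        lines = ['\n'] * len(file_lines)
        for section in sections:
            if section.language == language:
                # Copy this section's lines into the same positions.
                lines[section.start - 1:section.end] = \
                    file_lines[section.start - 1:section.end]
        nl_file_dict[temp_name] = tuple(lines)
    return nl_file_dict


contents = ('<!DOCTYPE html>\n<head>\n<title>Hello world as text</title>\n'
            '<?php\necho "<p>Hello world</p>";\n?>\n</html>\n')
sections = [Section(1, 'html', 1, 3), Section(2, 'php', 4, 6),
            Section(3, 'html', 7, 7)]
nl_file_dict = get_nl_file_dict(contents.splitlines(keepends=True), sections,
                                {'html': 'temp_html_file',
                                 'php': 'temp_php_file'})
```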

NlFileHandler.py Prototype

```python
def get_nl_file_dict(nl_file_info, nl_sections):
    r"""
    Returns a nl_file_dict, where the key is the name of the temporary file
    and the value is the contents of that file.

    :param nl_file_info: The information extracted from the arguments.
                         This is generated by the NlInfoExtractor.
    :param nl_sections:  The segregated sections of the original file.

    >>> nl_file_info
    {
        "file_name": "html_php_file.html",
        "absolute_file_path": "/home/test/html_php_file.html",
        "languages": ["html", "php"],
        "bears": ["HTMLBear", "PHPBear"],
        "language_bear_dict": language_bear_dict,
        "arg_dict": arg_dict
    }

    >>> nl_sections
    [ <NlSection object(index=1, language=html, orig_start=1, orig_end=3)>,
      <NlSection object(index=2, language=php, orig_start=4, orig_end=6)>,
      <NlSection object(index=3, language=html, orig_start=7, orig_end=7)>
    ]

    >>> file_contents = get_file_contents(nl_file_info['file_name'])
    >>> print(file_contents)
    <!DOCTYPE html>
    <head>
    <title>Hello world as text</title>
    <?php
    echo "<p>Hello world</p>";
    ?>
    </html>

    >>> nl_file_dict = get_nl_file_dict(nl_file_info, nl_sections)
    >>> nl_file_dict
    {
        "temp_html_file": ('<!DOCTYPE html>\n'
                           '<head>\n'
                           '<title>Hello world as text</title>\n'
                           '\n'
                           '\n'
                           '\n'
                           '</html>'),
        "temp_php_file": ('\n'
                          '\n'
                          '\n'
                          '<?php\n'
                          'echo "<p>Hello world</p>";\n'
                          '?>')
    }
    """


def get_file_contents(file_name):
    """Returns the contents of the file."""


def assemble(nl_diff_dict, nl_sections, nl_info_dict):
    """
    Assembles the temporary files.

    The sections are extracted in increasing order of their index and
    then written directly to the original file.
    """
    with open(nl_info_dict['absolute_file_path'], 'w') as file:
        for nl_section in sorted(nl_sections, key=lambda s: s.index):
            # Each nl_section is assumed to know the temp file it lives in.
            temp_file_name = nl_section.temp_file
            start = nl_section.linted_start
            end = nl_section.linted_end
            linted_file_content = nl_diff_dict[temp_file_name]

            # Helper (still to be written) that returns the lines
            # start..end of the linted temp file.
            section_content = get_file_content(linted_file_content,
                                               start, end)
            file.write(section_content)
```

Phase 3: Linting the file

This phase deals with linting the temporary files created by the NlFileHandler using the nl_sections.

In coala, the linting of the files is done when the sections created by coala are executed.

Since we are explicitly making the sections for nested languages, care has been taken to keep the file names in the nl.lang sections and the file names in the nl_file_dict the same. During the execution of a section, in instantiate_process(), we do not access the physical file; rather, we replace the file_dict with the nl_file_dict and make the necessary changes. Once the file_dict is changed, coala continues the process normally. To coala, it now looks as if the temporary files were actually present on the physical drive, and the linting starts.
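To see why keeping the original line numbering matters here, the sketch below runs a stand-in "bear" (a plain generator that flags trailing whitespace, not a real coala bear) over an in-memory nl_file_dict. Because the temp files are padded with empty lines, the reported line numbers map straight back to the original file, as long as no patch has been applied yet. The function name and sample contents are illustrative.

```python
def trailing_whitespace_bear(filename, file_lines):
    """Stand-in for a bear: yield (filename, line_nr) for every line with
    trailing whitespace. It only ever sees the in-memory lines."""
    for line_nr, line in enumerate(file_lines, start=1):
        if line.rstrip('\n') != line.rstrip():
            yield filename, line_nr


# Temp files padded with empty lines, as produced by the NlFileHandler.
nl_file_dict = {
    'temp_html_file': ('<html>\n', '\n', '\n', '</html>  \n'),
    'temp_php_file': ('\n', '<?php echo 1; \n', '?>\n', '\n'),
}

results = [result
           for name, lines in nl_file_dict.items()
           for result in trailing_whitespace_bear(name, lines)]
# [('temp_html_file', 4), ('temp_php_file', 2)]: lines 4 and 2 of the
# original nested file, respectively.
```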

Applying the Patches

Whenever a bear suggests a patch and the user decides to apply it, we also need to update the linted_start and linted_end values. This is needed because applying a patch may shift the positions of lines through the addition and deletion of lines. Keeping track of the start and end of each nl_section in the linted files makes it easier to extract the nl_section from the temporary files.

In order to do so, we'll have to make changes to the apply() method of the ApplyAction. We use the update_nl_sections() function to update the values.
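The bookkeeping could look roughly like the sketch below. It is a simplification that assumes a patch touches one temp file at one line and changes the line count by a known net amount `delta` (lines added minus lines deleted, which `Diff.stats()` would help compute). Sections of that language below the patch move by `delta`; the section containing the patch only has its end moved. The mutable `Section` dataclass stands in for NlSection, and `shift_sections` is a hypothetical name.

```python
from dataclasses import dataclass


@dataclass
class Section:
    index: int
    language: str
    linted_start: int  # 1-based, inclusive, position in the temp file
    linted_end: int


def shift_sections(sections, language, line_nr, delta):
    """Update linted positions after a patch at `line_nr` of the temp file
    for `language` changed its line count by `delta`."""
    for section in sections:
        if section.language != language:
            continue  # other temp files are not touched by this patch
        if section.linted_start > line_nr:
            # Entirely below the patch: the whole section moves.
            section.linted_start += delta
            section.linted_end += delta
        elif section.linted_end >= line_nr:
            # The patch falls inside this section: only its end moves.
            section.linted_end += delta


sections = [Section(1, 'html', 1, 3), Section(2, 'php', 4, 6),
            Section(3, 'html', 7, 7)]
shift_sections(sections, 'html', line_nr=2, delta=2)  # 2 lines added at line 2
```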

In Diff.py, we add a new function get_diff_info() that gives us the information of the diff.

Diff.py

```python
# Proposed new method of coala's Diff class: self._changes maps line
# numbers to LineDiff objects.
def get_diff_info(self):
    """
    Returns a tuple containing the line numbers of changed, deleted and
    added lines.
    """
    deleted_lines = []
    added_lines = []
    changed_lines = []

    for line_nr in self._changes:
        line_diff = self._changes[line_nr]

        if line_diff.change:
            changed_lines.append(line_nr)
        elif line_diff.delete:
            deleted_lines.append(line_nr)
        if line_diff.add_after:
            added_lines.append(line_nr)

    return changed_lines, deleted_lines, added_lines
```

NLCore.py

The following methods belong to NlCore:

```python
# stats() and get_diff_info() are methods of the Diff of the patch being
# applied, so the caller computes the two values first:
#     diff_stats = diff.stats()          # (additions, deletions)
#     diff_info = diff.get_diff_info()   # (changed, deleted, added) lines


def find_section_index(diff_stats, diff_info, nl_sections):
    """
    Returns the section index to which the patch is about to be applied.
    """
    index = None  # placeholder: the lookup logic is still to be written
    return index


def update_nl_sections(diff_stats, diff_info, nl_sections):
    """
    Updates the `linted_start` and the `linted_end` of the nl_sections.
    """
    index = find_section_index(diff_stats, diff_info, nl_sections)

    for nl_section in nl_sections:
        if nl_section.index == index:
            # Update the values of `linted_start` and `linted_end`.
            pass
```

Phase 4: Assembling

This phase deals with assembling the linted temporary files back into the original file.

This phase has two parts:

- Extracting the sections from the linted temporary files.

- Assembling these sections.

Once all the coala sections have been executed, we have an nl_diff_dict, where the key is the name of the temporary file and the value is the linted contents of that temporary file.

We have an assemble() method inside the NlFileHandler, which uses the information from the nl_sections to extract the sections from the nl_diff_dict and write them to the original file.
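Before the prototype, here is a self-contained toy version of the same assembly step that returns the assembled text instead of writing it to disk. It assumes simplified `Section` tuples carrying `linted_start`/`linted_end` (1-based, inclusive), a hypothetical language-to-temp-file mapping, and an nl_diff_dict whose values are tuples of lines. In the sample data the HTML temp file gained a line during linting, so the last HTML section moved from line 7 to line 8.

```python
from typing import NamedTuple


class Section(NamedTuple):
    index: int
    language: str
    linted_start: int  # 1-based, inclusive, position in the linted file
    linted_end: int


def assemble_text(nl_diff_dict, sections, temp_names):
    """Concatenate every section's linted lines in index order."""
    parts = []
    for section in sorted(sections, key=lambda s: s.index):
        lines = nl_diff_dict[temp_names[section.language]]
        parts.extend(lines[section.linted_start - 1:section.linted_end])
    return ''.join(parts)


nl_diff_dict = {
    # A bear inserted the <meta> line, pushing </html> down to line 8.
    'temp_html_file': ('<!DOCTYPE html>\n', '<head>\n',
                       '<meta charset="utf-8">\n', '<title>Hi</title>\n',
                       '\n', '\n', '\n', '</html>\n'),
    'temp_php_file': ('\n', '\n', '\n', '<?php\n', 'echo "hi";\n', '?>\n',
                      '\n'),
}
sections = [Section(1, 'html', 1, 4), Section(2, 'php', 4, 6),
            Section(3, 'html', 8, 8)]
result = assemble_text(nl_diff_dict, sections,
                       {'html': 'temp_html_file', 'php': 'temp_php_file'})
```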

```python
def assemble(nl_diff_dict, nl_sections, nl_info_dict):
    """
    Assembles the temporary files.

    The sections are extracted in increasing order of their index and
    then written directly to the original file.
    """
    with open(nl_info_dict['absolute_file_path'], 'w') as file:
        for nl_section in sorted(nl_sections, key=lambda s: s.index):
            # Each nl_section is assumed to know the temp file it lives in.
            temp_file_name = nl_section.temp_file
            start = nl_section.linted_start
            end = nl_section.linted_end
            linted_file_content = nl_diff_dict[temp_file_name]

            # Helper (still to be written) that returns the lines
            # start..end of the linted temp file.
            section_content = get_file_content(linted_file_content,
                                               start, end)
            file.write(section_content)
```

NlCore.py Prototype

```python
from coalib.nestedlib.NlInfoExtractor import extract_info
from coalib.nestedlib.NlFileHandler import (get_file_contents,
                                            get_nl_file_dict, assemble)


def get_arg_info(arg):
    """
    Return the nl_info_dict and the argument list that will be used to make
    the coala sections.

    >>> nl_info_dict, arg_list = get_arg_info(arg)
    >>> arg_list
    [ ( (file, temp_html_file), (bears, HTMLBear), (settings, value) ),
      ( (file, temp_php_file), (bears, PHPBear), (settings, value) )
    ]
    """
    nl_info_dict = extract_info(arg)
    # make_arg_list() (still to be written) turns nl_info_dict into the
    # argument lists shown above.
    arg_list = make_arg_list(nl_info_dict)

    return nl_info_dict, arg_list


def get_file_metadata(nl_info_dict):
    """
    Returns the nl_sections and nl_file_dict.
    """
    # detect_parser() (still to be written) picks the parser from
    # nestedlib/parsers that handles this combination of languages.
    parser = detect_parser(nl_info_dict['languages'])
    file_contents = get_file_contents(nl_info_dict['absolute_file_path'])

    nl_sections = parser.parse(file_contents)
    nl_file_dict = get_nl_file_dict(nl_info_dict, nl_sections)

    return nl_sections, nl_file_dict


def assemble_files(nl_diff_dict, nl_sections, nl_info_dict):
    """
    Assembles the file and returns back to coala_main.
    """
    return assemble(nl_diff_dict, nl_sections, nl_info_dict)
```

Changes in coala.py

```python
from coalib.nestedlib.NlCore import get_arg_info, get_file_metadata


def main(debug=False):
    configure_logging()

    args = None  # to have args variable in except block
                 # when parse_args fails
    try:
        args = default_arg_parser().parse_args()

        # Set by the proposed --handle-nested flag.
        handle_nested = args.handle_nested
        nl_info_dict = nl_arg_dict = nl_sections = nl_file_dict = None

        if handle_nested:
            nl_info_dict, nl_arg_dict = get_arg_info(args)
            nl_sections, nl_file_dict = get_file_metadata(nl_info_dict)

        # ... existing contents of coala.py ...

        return mode_normal(console_printer, None, args, debug=debug,
                           handle_nested=handle_nested,
                           nl_info_dict=nl_info_dict,
                           nl_arg_dict=nl_arg_dict,
                           nl_sections=nl_sections,
                           nl_file_dict=nl_file_dict)
    except BaseException as exception:
        ...  # existing exception handling of coala.py, unchanged
```

That's it for now, folks. I'll update you as soon as I complete all my tasks of Coding Phase 1. All the best to me :grimacing: